regularization machine learning meaning

Moving on with this article on regularization in machine learning: intuitively, regularization means that we force our model to give less weight to features that are less important for predicting the target variable and more weight to those that are more important.

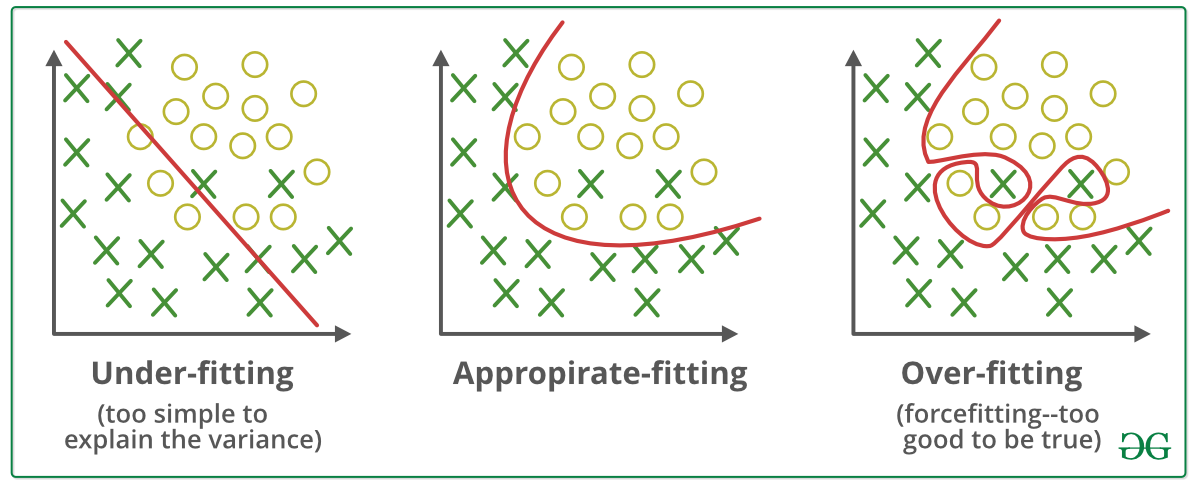

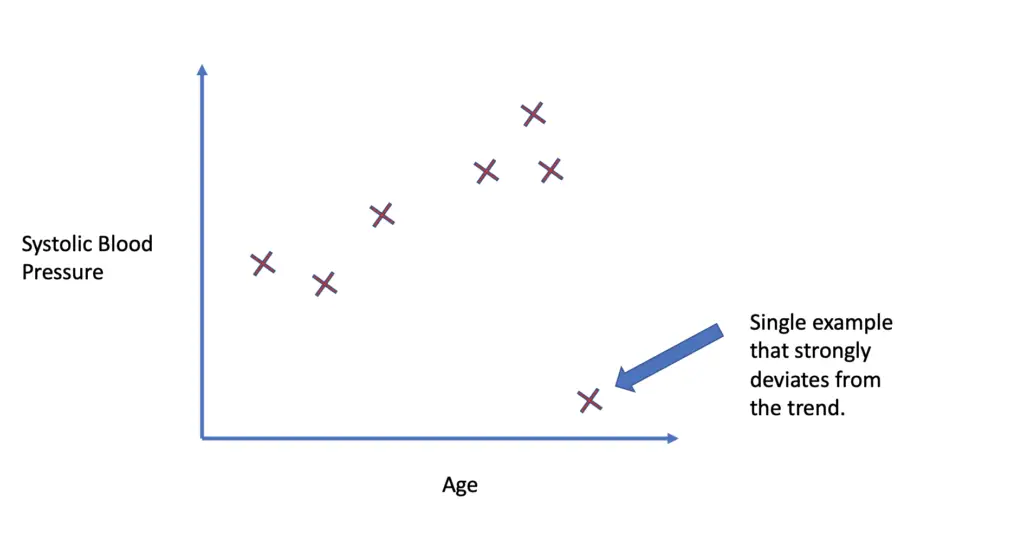

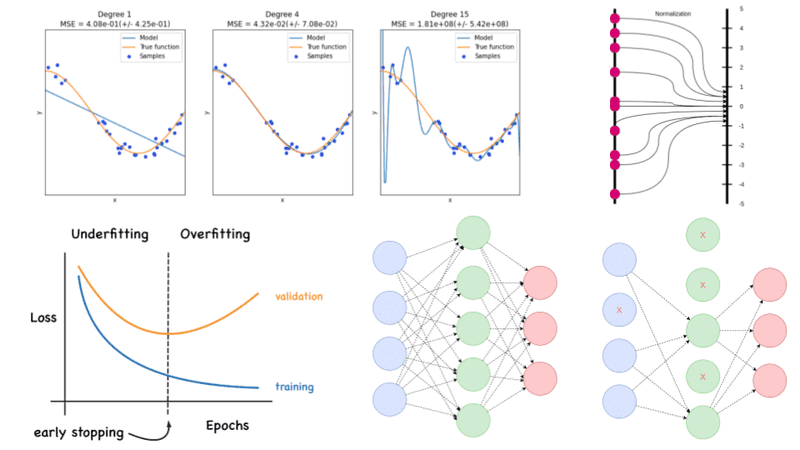

Overfitting is a phenomenon that occurs when a model learns the detail and noise in the training data to such an extent that it hurts the model's performance on new data.
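As a rough illustration of the definition above, the following sketch fits polynomials of increasing degree to a small noisy sample and compares training error with error on fresh data. The sine-shaped data, the noise level, the degrees, and the random seed are all assumptions chosen for the example, not values from this article.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is repeatable

def noisy_sine(x):
    """Hypothetical target: a sine curve plus Gaussian noise."""
    return np.sin(np.pi * x) + 0.3 * rng.normal(size=x.size)

# A small training set and a larger set of unseen points.
x_train = np.linspace(-1.0, 1.0, 10)
y_train = noisy_sine(x_train)
x_test = rng.uniform(-1.0, 1.0, size=200)
y_test = noisy_sine(x_test)

def mse(coeffs, x, y):
    """Mean squared error of a polynomial with the given coefficients."""
    return float(np.mean((np.polyval(coeffs, x) - y) ** 2))

results = {}
for degree in (1, 3, 9):
    coeffs = np.polyfit(x_train, y_train, degree)  # ordinary least squares fit
    results[degree] = (mse(coeffs, x_train, y_train), mse(coeffs, x_test, y_test))
    print(f"degree={degree} train_mse={results[degree][0]:.4f} test_mse={results[degree][1]:.4f}")
```

With 10 training points, the degree-9 polynomial can pass through every point, so its training error is essentially zero. In runs like this its error on the unseen points is usually much larger, which is the gap the definition describes.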

Regularization is an application of Occam's razor. In other terms, regularization discourages learning a more complex or more flexible machine learning model in order to prevent overfitting. In machine learning, regularization is a procedure that shrinks the coefficients towards zero.
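To make the idea of shrinking coefficients towards zero concrete, here is a minimal sketch using the closed-form ridge (L2) solution on synthetic data. The function name `ridge_fit`, the penalty strengths, and the generated data are assumptions for illustration only.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution (X^T X + lam * I)^-1 X^T y, without an intercept."""
    n_features = X.shape[1]
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)

# Synthetic data: three features with known weights plus a little noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
true_w = np.array([2.0, -1.0, 0.5])
y = X @ true_w + 0.1 * rng.normal(size=50)

# A larger penalty strength pulls the fitted coefficients closer to zero.
for lam in (0.0, 1.0, 10.0, 100.0):
    w = ridge_fit(X, y, lam)
    print(f"lam={lam:6.1f} w={np.round(w, 3)} norm={np.linalg.norm(w):.3f}")
```

Setting `lam=0.0` reproduces ordinary least squares. As `lam` grows, the norm of the coefficient vector decreases, which is the shrinkage the paragraph refers to.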

Regularization is also considered a process of adding more information to resolve a complex issue and avoid overfitting. Sometimes one resource is not enough to give you a good understanding of a concept. Regularization techniques help prevent machine learning algorithms from overfitting.

In other words, this technique discourages learning a more complex or flexible model, so as to avoid the risk of overfitting. Part 1 deals with the theory of why regularization came into the picture and why we need it.

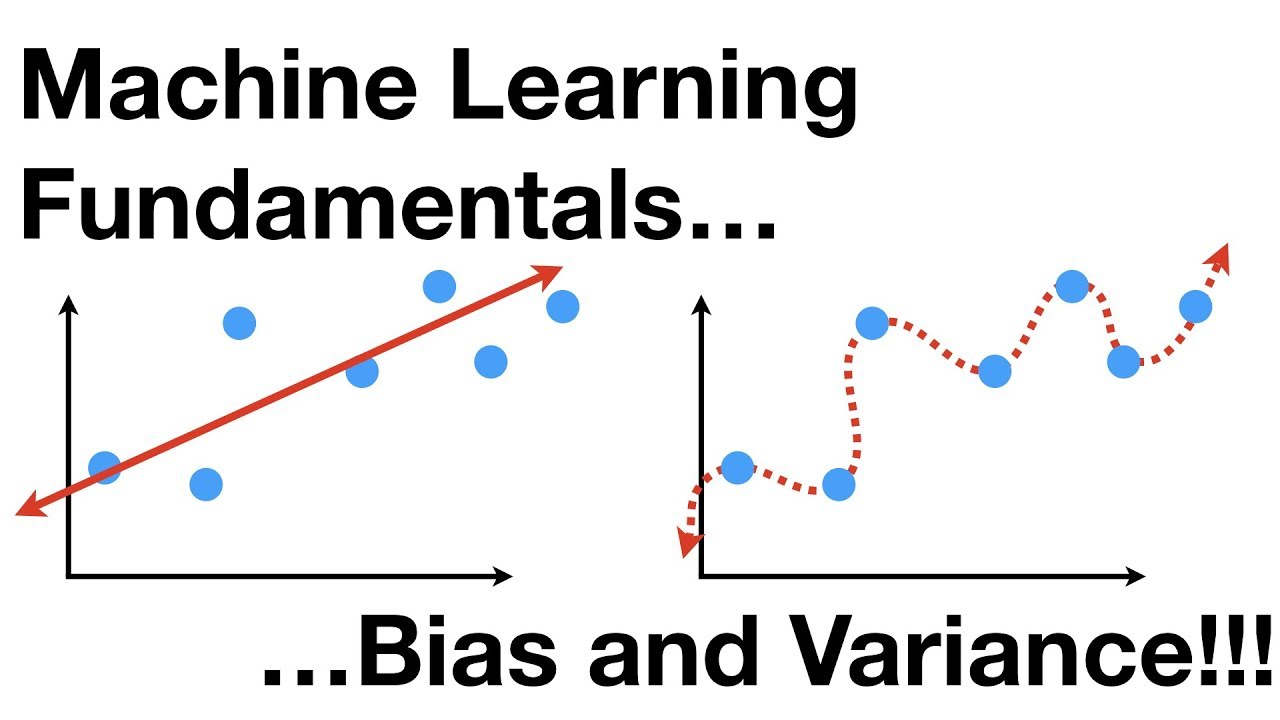

Overfitting is a phenomenon that occurs when a machine learning model is fitted too closely to the training set and is not able to perform well on unseen data. A simple relation for linear regression looks like this. Regularization focuses on controlling the complexity of the machine learning model.
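The linear regression relation mentioned above can be written in a common textbook notation. The symbols are an assumption, since the original figure is not reproduced here:

$$
Y \approx \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_p X_p
$$

Here $Y$ is the predicted target, $X_1, \dots, X_p$ are the features, and $\beta_0, \dots, \beta_p$ are the coefficient estimates whose size regularization controls.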

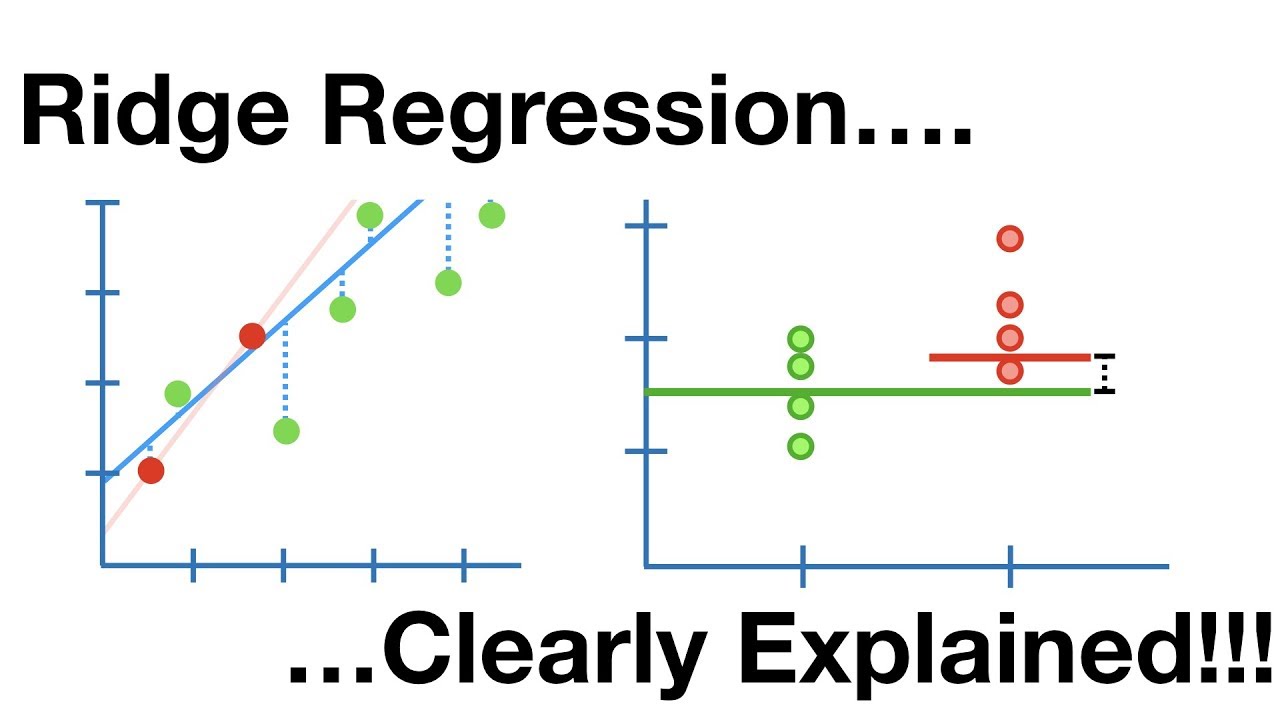

It is very important to understand regularization in order to train a good model. Both overfitting and underfitting are problems that ultimately cause poor predictions on new data. Regularized regression is a form of regression that constrains, regularizes, or shrinks the coefficient estimates towards zero.

Regularization is a technique that calibrates machine learning models by making the loss function take feature importance into account. When tuned well, regularization can reduce the variance of the model without a substantial increase in its bias.

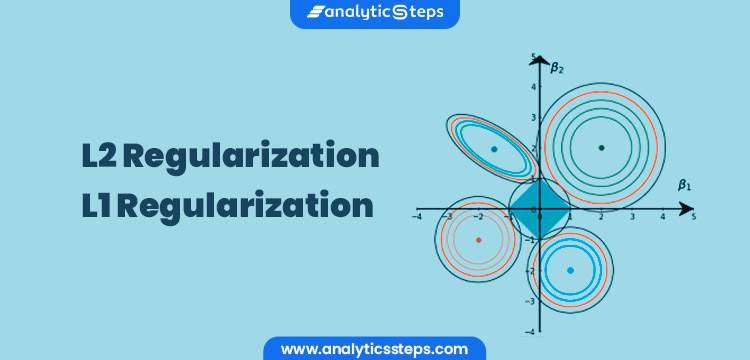

There are mainly two types of regularization techniques. Regularization is one of the techniques used to control overfitting in highly flexible models. For every weight w.

Sometimes a machine learning model performs well on the training data but does not perform well on the test data. Because the regularization term does not depend on the data, it only serves to bias the structure of the model parameters. In simple words, regularization discourages learning a more complex or flexible model in order to prevent overfitting.

In machine learning, overfitting is a common problem that reduces the accuracy and performance of models on new data. Overfitting occurs when a model learns the noise in the data rather than the underlying patterns or trends. In the context of machine learning, regularization is the process that regularizes or shrinks the coefficients towards zero.

Regularization is an important concept in machine learning, and it addresses the overfitting problem.

Regularization is one of the most basic and important concepts in machine learning. Dropout is one of the most effective regularization techniques to have emerged in the last few years. In machine learning, regularization is a technique used to avoid overfitting.
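Since dropout is mentioned above without detail, here is a minimal sketch of one common variant, often called inverted dropout, written with NumPy. The function name `dropout`, its parameters, and the drop probability are assumptions for illustration. This is not the API of any particular deep learning library.

```python
import numpy as np

def dropout(activations, drop_prob, rng, training=True):
    """Randomly zero units during training and rescale the survivors.

    Scaling by 1 / keep_prob keeps the expected activation unchanged,
    so nothing needs to be rescaled at inference time.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        return activations
    keep_prob = 1.0 - drop_prob
    mask = rng.random(activations.shape) < keep_prob  # True where a unit is kept
    return activations * mask / keep_prob

rng = np.random.default_rng(0)
hidden = np.ones((4, 5))              # stand-in for a layer's activations
train_out = dropout(hidden, 0.5, rng)                  # some units zeroed, the rest doubled
eval_out = dropout(hidden, 0.5, rng, training=False)   # returned unchanged
print(train_out)
```

Each training call draws a fresh random mask, so the network cannot rely on any single unit being present.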

When you train a model with artificial neural networks, you will encounter numerous problems. As seen above, we want our model to perform well on both the training data and new, unseen data, meaning the model must be able to generalize. While regularization is used with many different machine learning algorithms, including deep neural networks, in this article we use linear regression to explain regularization and its usage.

I have learnt regularization from different sources and I feel learning from different sources is very. Regularization helps to reduce overfitting by adding constraints to the model-building process. It is one of the key concepts in machine learning, as it helps choose a simple model rather than a complex one.

This is where regularization comes into the picture: it shrinks or regularizes the learned estimates towards zero by adding a penalty term to the loss function, so that the optimized parameters give a model that can predict the value of Y accurately. It is not a complicated technique, and it simplifies the machine learning process.
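One common way to write such a modified loss, shown here for linear regression with an L2 (ridge) penalty, is sketched below. The choice of penalty and the symbols $n$, $p$, $x_{ij}$, $\beta_j$, and $\lambda$ are assumptions for illustration:

$$
\sum_{i=1}^{n}\Bigl(y_i - \beta_0 - \sum_{j=1}^{p}\beta_j x_{ij}\Bigr)^2 + \lambda \sum_{j=1}^{p}\beta_j^2
$$

The first term is the usual residual sum of squares over $n$ training examples, and the second term penalizes large coefficients. The tuning parameter $\lambda \ge 0$ controls how strongly the estimates are shrunk towards zero; with $\lambda = 0$ the expression reduces to ordinary least squares.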

This is an important theme in machine learning. Regularization is a technique to prevent the model from overfitting by adding extra information to it. Overfitting occurs when a model learns the training data too well and therefore performs poorly on new data.

I have covered the entire concept in two parts. L2 regularization is the most common form of regularization. Regularization is one of the most important concepts of machine learning.

Regularization refers to techniques that are used to calibrate machine learning models in order to minimize the adjusted loss function and prevent overfitting or underfitting. The regularization term modifies the error term without depending on the data. As data scientists it is of utmost importance that we learn.

Setting up a machine learning model is not just about feeding in the data. The regularization term is probably what most people mean when they talk about regularization. Part 2 will explain what regularization is and some proofs related to it.

The basic idea behind dropout is to run every iteration of the training algorithm on. L2 regularization penalizes the squared magnitude of all parameters in the objective function. It means the model is not able to.
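The squared-magnitude penalty described above can be sketched in a few lines. The factor of one half is a common convention that makes the gradient simply `lam * w`. That factor, the names `l2_penalty` and `l2_penalty_grad`, and the values of `lam` and `lr` are all assumptions for this example.

```python
import numpy as np

def l2_penalty(weights, lam):
    """L2 penalty 0.5 * lam * sum(w^2), added to the data loss."""
    return 0.5 * lam * float(np.sum(weights ** 2))

def l2_penalty_grad(weights, lam):
    """Gradient of the penalty with respect to the weights: lam * w."""
    return lam * weights

w0 = np.array([3.0, -2.0, 0.5])
lam = 0.1   # penalty strength (hypothetical value)
lr = 0.5    # learning rate (hypothetical value)

# Gradient steps on the penalty alone: each step multiplies w by (1 - lr * lam),
# which is why this term is often described as weight decay.
w = w0.copy()
for step in range(3):
    w = w - lr * l2_penalty_grad(w, lam)
    print(step, w, l2_penalty(w, lam))
```

In a real training loop the penalty gradient would be added to the gradient of the data loss. It is applied on its own here only to show the decay effect in isolation.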

Regularization is a technique used to solve the overfitting problem in machine learning models. It is essential in both machine learning and deep learning. It reduces errors by fitting the function appropriately on the given training set, which helps avoid overfitting.

Regularization is a method for overcoming overfitting, which mainly arises from increased model complexity. It balances overfitting and underfitting while the model is trained. Overfitting in an existing model can be reduced by adding a penalty term to the cost function that gives a higher penalty to more complex curves.
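The idea of a cost function with an added penalty term can be sketched as follows for a polynomial curve fit. The cost definition (mean squared error plus `lam` times the sum of squared coefficients), the degree-9 features, the synthetic data, and the value of `lam` are assumptions for illustration. For simplicity the intercept is penalized as well.

```python
import numpy as np

def penalized_cost(w, X, y, lam):
    """Mean squared error plus an L2 penalty on the coefficients."""
    residual = X @ w - y
    return float(np.mean(residual ** 2) + lam * np.sum(w ** 2))

# Small noisy sample and degree-9 polynomial features (columns 1, x, ..., x^9).
rng = np.random.default_rng(1)
x = np.linspace(-1.0, 1.0, 12)
y = np.sin(np.pi * x) + 0.2 * rng.normal(size=x.size)
X = np.vander(x, N=10, increasing=True)
n = X.shape[0]

# Unpenalized least squares: free to use large coefficients (a wiggly curve).
w_flexible, *_ = np.linalg.lstsq(X, y, rcond=None)

# Minimizer of penalized_cost, from setting its gradient to zero:
# (X^T X / n + lam * I) w = X^T y / n
lam = 1e-2
w_penalized = np.linalg.solve(X.T @ X / n + lam * np.eye(X.shape[1]), X.T @ y / n)

for name, w in (("flexible", w_flexible), ("penalized", w_penalized)):
    print(name, "coef_norm=%.3f" % np.linalg.norm(w),
          "cost=%.4f" % penalized_cost(w, X, y, lam))
```

The unpenalized fit reaches a lower raw training error, but its large coefficients make it expensive under the penalized cost. The penalized solution trades a little training error for a much smaller coefficient norm.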
